Detecting simple sentiment (happy/sad) is easy. Detecting whether a complex conversation followed a strict 50-page rulebook is hard.

In any scenario requiring strict adherence to protocol—whether it’s an astronaut pre-flight check or a customer support script—a generic “Did they do a good job?” prompt fails. It lacks precision.

This post details how we engineered a Structured Compliance Engine using a custom compressed prompt format to achieve high-reliability auditing.

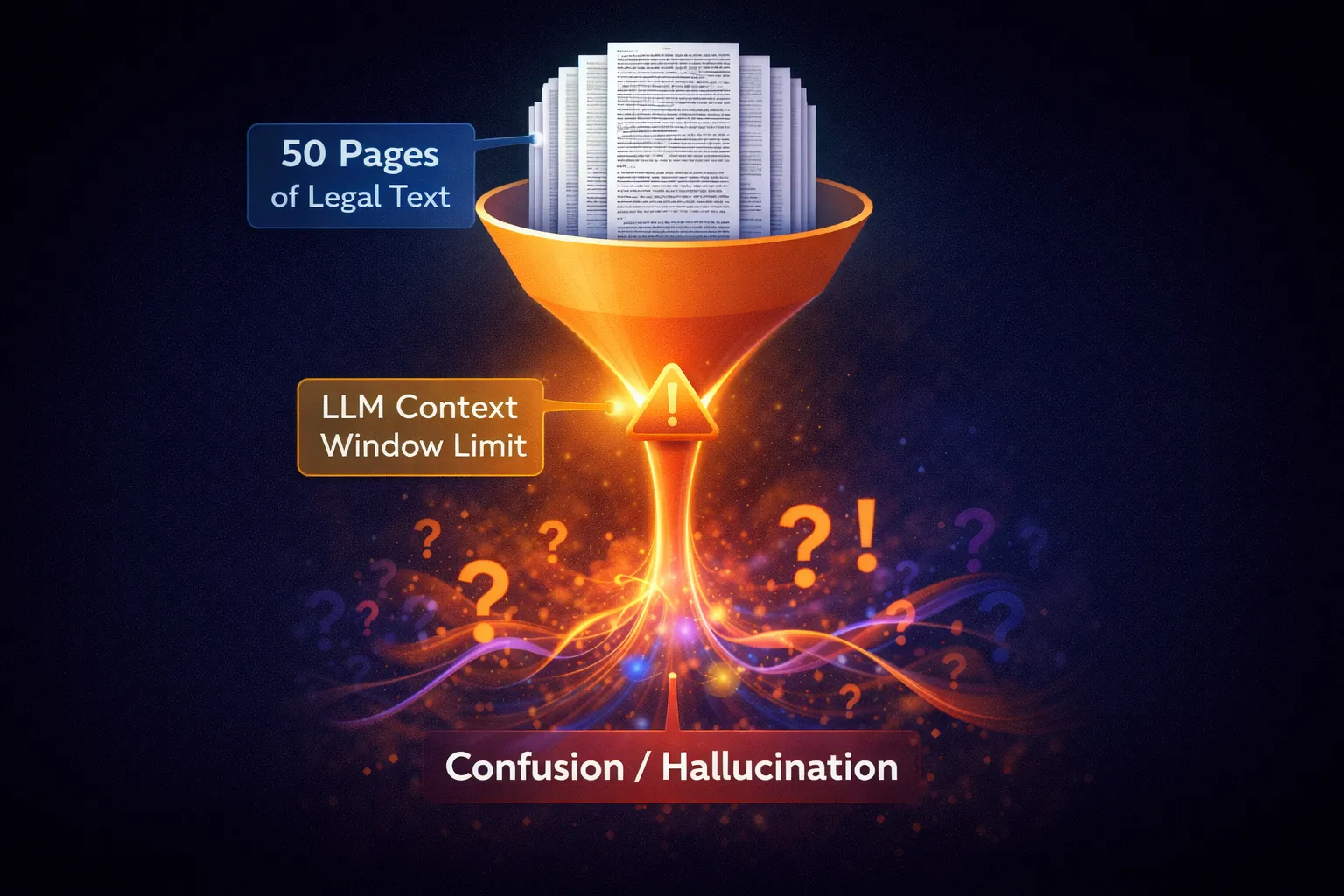

The Challenge: The “Hallucinating Auditor”

We faced three common problems when using LLMs for rigid auditing:

- Context Overflow: Sending an entire policy manual blows up the token limit.

- Subjectivity: The AI might forgive a critical error because the speaker was “polite.”

- False Positives: Flagging a minor stumble as a catastrophic failure.

The Solution: ‘logic-Compression’

Instead of pasting pages of legalese, we devised a method to compress rules into a density that LLMs process more effectively. We call this “Optimized-Rule-Set” format.

Before vs. After (The “Pizza Shop” Example)

Imagine you are auditing a pizza order taker.

- The Policy PDF: “It is strictly mandatory that every employee, when answering the phone, must explicitly state the current special offer of two-for-one pepperoni slices, unless it is a Tuesday…” (Verbose, confusing).

- Compressed Format:

RULE: "Upsell_Special" | MANDATORY: "mention 2-for-1 slices" | EXCEPTION: "Tuesday" | SEVERITY: "MEDIUM"(Concise, logic-focused).

def build_audit_prompt(conversation_text, rule_definitions):

"""

Constructs a highly structured audit prompt using the Compressed Logic format.

"""

optimized_rules = convert_to_compressed_format(rule_definitions)

prompt = f"""

You are a **Protocol Compliance Auditor**.

**🔴 THE PROTOCOL:**

1. If an action is NOT explicitly prohibited, it is ALLOWED.

2. Ambiguous cases must be marked as 'WARNING' only.

3. You must cite specific evidence for every flag.

**THE RULES (Compressed Format):**

{optimized_rules}

Analyze the interaction below.

"""

return promptThe “Chain of Thought” Logic

One of the biggest breakthroughs was forcing the model to categorize “Training Gaps” vs. “Critical Failures.”

We codified this directly into the system prompt.

"""

**EVALUATION LOGIC:**

1. **Step 1: Check for Training Gaps**

- Was this a minor slip-up (e.g., stuttering, forgot a greeting)?

- If YES → Mark as 'COACHING'. Do NOT fail the audit.

2. **Step 2: Check for Critical Failures**

- DId the user violate a strict safety/legal prohibition?

- If YES → Mark as 'FAILURE'.

3. **Step 3: Verification**

- Can you quote the exact line where this happened?

- If NO → Do NOT flag.

"""Structured Output for Automation

We don’t want a paragraph of text back. We need structured data.

{

"audit_results": [

{

"category": "Protocol_Violation",

"evidence": "User failed to confirm launch sequence.",

"timestamp": "04:23",

"confidence_score": 0.98,

"severity": "CRITICAL"

}

],

"training_notes": [

{

"category": "Communication",

"observation": "User spoke too quickly.",

"suggestion": "Slow down for clarity.",

"confidence_score": 0.85

}

]

}Why This Matters

By treating the LLM logic like code—with strict “If/Else” instructions in the prompt—we reduced false positives significantly. The “Compressed” format keeps our token costs low, while the strict separation of Coaching vs. Failures ensures the system is fair.

We turned an LLM from a “creative writer” into a “strict auditor.”